An interesting case of ‘enq: CR – block range reuse ckpt’, CKPT blocking user sessions …

Posted by FatDBA on May 2, 2022

Hi All,

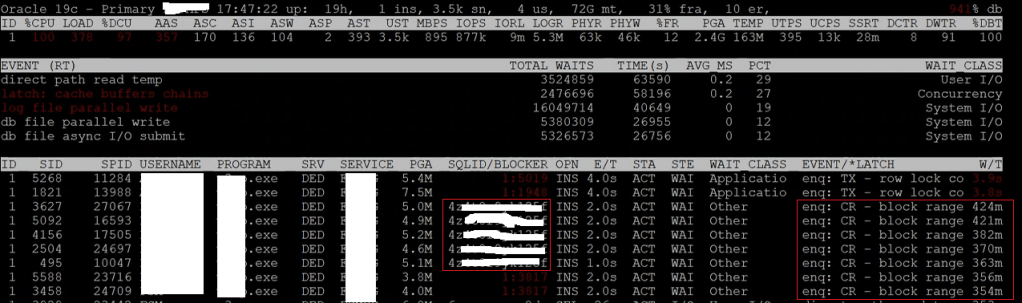

Last week we faced an interesting issue on one of our production systems, which had recently been migrated from Oracle 12.2 to 19.15. The setup was running on a VMware machine with limited resources. It all started when the application team began reporting slowness in their daily scheduled jobs and other ad-hoc operations. When we checked at the database layer, the sessions were all waiting on ‘enq: CR – block range reuse ckpt’. The same can be seen in the ORATOP output, where blocker ID 3817 is CKPT, the checkpoint process.

The strange part was that the blocker was the CKPT process, and the waits were all for one particular SQL ID (an INSERT operation), as the next oratop screenshot shows.

This wait event belongs to the ‘Other’ wait class. It normally appears just before a table is deleted from or truncated, when a segment-level checkpoint is needed to keep the blocks in the buffer cache consistent with what is on disk. By definition, it points to contention on blocks caused by multiple processes trying to update the same blocks. That would usually suggest an application-logic problem creating a concurrency bottleneck, but interestingly, here it was happening on a simple INSERT, not a DELETE or TRUNCATE.

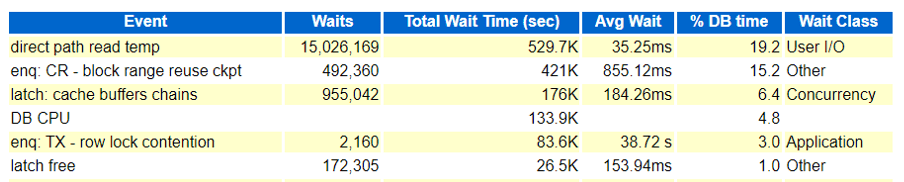

The same can be seen in the AWR and ASH reports. There are CBC (cache buffers chains) latch waits and ‘latch free’ events alongside ‘enq: CR – block range reuse ckpt’, but the initial focus was to understand this event and its causes. The ‘direct path read temp’ waits came from a couple of SELECT statements, which we resolved by attaching better plans to those SQLs.

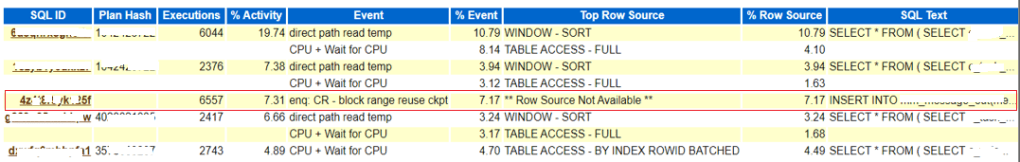

Wait event source (from ASH)

The SQL text was quite simple: an INSERT INTO statement.

INSERT INTO xx_xxx_xx(xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx, xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx, xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx, xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx, xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx) VALUES (xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx, xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx, xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx, xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx, xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx)

I first looked at the CKPT process trace, just to see whether anything interesting or useful had been caught there. The trace was short and contained only some obscure-looking content, but it at least told us that the reuse block range (RBR) checkpoint had failed due to an enqueue, and that adding its checkpoint entry had failed due to an abandoned parent. Still, that didn’t help much; we remained unsure of the reason.

--> Info in CKPT trace file ---> XXXXX_ckpt_110528.trc.

RBR_CKPT: adding rbr ckpt failed for 65601 due to enqueue

RBR_CKPT: rbr mark the enqueue for 65601 as failed

RBR_CKPT: adding ckpt entry failed due to abandoned parent 0x1b57b4a88

RBR_CKPT: rbr mark the enqueue for 65601 as failed

A few things were logged in the alert.log: multiple deadlocks (ORA-00060), too many parse errors for one SELECT statement, and some ‘Checkpoint not complete’ messages (log switching was high, >35).

-- deadlocks in alert log.

Errors in file /opt/u01/app/oracle/diag/rdbms/xxxxxx/xxxx/trace/xxxx_ora_73010.trc:

2022-04-19T13:41:22.489551+05:30 ◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄

ORA-00060: Deadlock detected. See Note 60.1 at My Oracle Support for Troubleshooting ORA-60 Errors. More info in file /opt/u01/app/oracle/diag/rdbms/pwfmfsm/PWFMFSM/trace/PWFMFSM_ora_73010.trc.

-- From systemstatedumps

[Transaction Deadlock]

The following deadlock is not an ORACLE error. It is a ◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄

deadlock due to user error in the design of an application

or from issuing incorrect ad-hoc SQL. The following

information may aid in determining the deadlock:

Deadlock graph:

------------Blocker(s)----------- ------------Waiter(s)------------

Resource Name process session holds waits serial process session holds waits serial

TX-03AC0014-0000B33A-00000000-00000000 1562 691 X 1632 946 5441 X 56143

TX-01460020-0001A5C2-00000000-00000000 946 5441 X 56143 1562 691 X 1632
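To read the deadlock graph above, it can help to decode the TX resource names and confirm that the two rows really form a cycle. The following is a minimal Python sketch, not Oracle tooling. It assumes the usual TX naming convention (the first hex field packs the undo segment number in the high 16 bits and the slot in the low 16 bits, the second field is the sequence number) and that each row carries four blocker tokens followed by four waiter tokens, as in the two rows shown. All function names are hypothetical.

```python
# Sketch only: parses the two deadlock-graph rows quoted in this post.
ROWS = [
    "TX-03AC0014-0000B33A-00000000-00000000 1562 691 X 1632 946 5441 X 56143",
    "TX-01460020-0001A5C2-00000000-00000000 946 5441 X 56143 1562 691 X 1632",
]

def decode_tx(resource):
    """Split a TX resource name into (undo segment, slot, sequence)."""
    parts = resource.split("-")
    id1 = int(parts[1], 16)
    id2 = int(parts[2], 16)
    return id1 >> 16, id1 & 0xFFFF, id2

def parse_row(row):
    """Return (resource, blocker session id, waiter session id)."""
    tokens = row.split()
    # tokens[0] is the resource; then process, session, mode, serial
    # for the blocker (tokens 1-4) and for the waiter (tokens 5-8).
    return tokens[0], int(tokens[2]), int(tokens[6])

def waits_for(rows):
    """Map each waiting session to the session holding the lock it wants."""
    graph = {}
    for row in rows:
        _, blocker, waiter = parse_row(row)
        graph[waiter] = blocker
    return graph

def has_cycle(graph):
    """Follow waiter -> blocker edges; revisiting a session means a deadlock."""
    for start in graph:
        seen, node = set(), start
        while node in graph:
            if node in seen:
                return True
            seen.add(node)
            node = graph[node]
    return False

for row in ROWS:
    resource, blocker, waiter = parse_row(row)
    usn, slot, seq = decode_tx(resource)
    print(f"{resource}: usn={usn} slot={slot} seq={seq}, "
          f"held by SID {blocker}, wanted by SID {waiter}")

print("cycle:", has_cycle(waits_for(ROWS)))
```

Here sessions 691 and 5441 each hold one TX lock in X mode and wait for the other's, which is the classic two-session application deadlock the trace message describes.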

-- too many parse errors

2022-04-19T13:57:26.261176+05:30

WARNING: too many parse errors, count=2965 SQL hash=0x8ce1e2ff ◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄

PARSE ERROR: ospid=68036, error=942 for statement:

2022-04-19T13:57:26.272805+05:30

SELECT * FROM ( SELECT xxxxxxxxxxxxxxxxxxxxxx FROM xxxxxxxxxxxxxxxxxxxxxx AND xxxxxxxxxxxxxxxxxxxxxx ORDER BY xxxxxxxxxxxxxxxxxxxxxx ASC ) WHERE ROWNUM <= 750

Additional information: hd=0x546ba1be8 phd=0x61edab798 flg=0x20 cisid=113 sid=113 ciuid=113 uid=113 sqlid=ccmkzhy6f3srz

...Current username=xxxxxxxxxxxxxxxxxxxxxx

...Application: xxxxxxxxxxxxxxxxxxxxxx.exe Action:

-- Checkpoint incomplete

2022-04-19T15:03:16.964470+05:30 ◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄

Thread 1 cannot allocate new log, sequence 186456

Checkpoint not complete

Current log# 11 seq# 186455 mem# 0: +ONLINE_REDO/xxxxxxxxxxxxxxxxxxxxxx/ONLINELOG/group_11.256.1087565999

2022-04-19T15:03:17.785113+05:30
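To judge how often a message such as "Checkpoint not complete" shows up, the alert log can be bucketed by hour. Below is a rough Python sketch of that idea. It assumes the alert-log layout seen in the excerpts here (a timestamp line followed by its message lines), and the helper name `count_per_hour` is hypothetical. The sample is just the excerpt above, so it says nothing about the real switch rate on this system.

```python
import re
from collections import Counter

# Alert-log timestamps in this post look like 2022-04-19T15:03:16.964470+05:30
TS = re.compile(r"^(\d{4}-\d{2}-\d{2})T(\d{2}):\d{2}:\d{2}\.\d+[+-]\d{2}:\d{2}")

def count_per_hour(lines, needle):
    """Count lines containing `needle`, bucketed by the hour of the
    most recent timestamp line seen before them."""
    counts = Counter()
    bucket = None
    for line in lines:
        match = TS.match(line.strip())
        if match:
            bucket = f"{match.group(1)} {match.group(2)}:00"
        elif needle in line and bucket is not None:
            counts[bucket] += 1
    return counts

# Sample copied from the alert-log excerpt in this post.
sample = """\
2022-04-19T15:03:16.964470+05:30
Thread 1 cannot allocate new log, sequence 186456
Checkpoint not complete
2022-04-19T15:03:17.785113+05:30
""".splitlines()

print(count_per_hour(sample, "Checkpoint not complete"))
```

Run against a full alert log (for example `open(path).read().splitlines()`), the same function gives a per-hour count that can be lined up with the periods when users reported slowness.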

But the alert log was not enough to give us any concrete evidence of why CKPT was blocking user sessions. So next we generated HANGANALYZE and SYSTEMSTATE dumps to understand what was happening under the hood through the wait chains. We noticed a few interesting things there:

- Wait chain 1, where a session was waiting on ‘buffer busy waits’ for a “bitmapped file space header” block. This block class relates to space management (it has nothing to do with bitmap indexes), and the wait was tied to a SELECT statement.

- Wait chain 2, where a session was waiting on ‘enq: CR – block range reuse ckpt’ and was blocked by the CKPT process (SID 3817), which in turn was waiting on ‘control file sequential read’.

- Wait chain 4, where SID 1670 was waiting on ‘buffer busy waits’ for the same ‘bitmapped file space header’ block class.
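The wait parameters in the chains that follow are printed in hexadecimal. As a rough aid for reading them, here is a small Python sketch (the helper names are hypothetical and not part of any Oracle tool). It assumes the standard enqueue encoding of `name|mode`, where the two high-order bytes of p1 are the ASCII lock type and the low 16 bits are the requested mode.

```python
def decode_enqueue_p1(p1):
    """Split an enqueue wait's p1 ('name|mode') into (lock type, mode)."""
    lock_type = chr((p1 >> 24) & 0xFF) + chr((p1 >> 16) & 0xFF)
    mode = p1 & 0xFFFF
    return lock_type, mode

def hex_params(**params):
    """Convert hex strings such as '0xca' from a hang-analyze dump to ints."""
    return {name: int(value, 16) for name, value in params.items()}

# p1 copied from chain 2 (the enqueue wait).
print(decode_enqueue_p1(0x43520006))

# file#, block# and class# copied from chains 1 and 4 (buffer busy waits).
print(hex_params(file="0xca", block="0x2", block_class="0xd"))
```

With the values from the dumps, p1 decodes to lock type CR requested in mode 6 (exclusive, in Oracle's lock-mode numbering), and the buffer busy waits all point at the same place: file 202, block 2, class 13.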

Chain 1:

-------------------------------------------------------------------------------

Oracle session identified by:

{

instance: 1 (kpkpkpkpk.kpkpkpkpk)

os id: 46794

process id: 2014, oracle@monkeymachine.prod.fdt.swedish.se

session id: 15

session serial #: 19322

module name: 0 (xxxx.exe)

}

is waiting for 'buffer busy waits' with wait info:

{

p1: 'file#'=0xca

p2: 'block#'=0x2

p3: 'class#'=0xd ◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄ bitmapped file space header

time in wait: 2 min 20 sec

timeout after: never

wait id: 10224

blocking: 0 sessions

current sql_id: 1835193535

current sql: SELECT * FROM ( SELECT xxxxx FROM task JOIN request ON xxxxx = xxxxx JOIN xxxxx ON xxxxx = xxxxx JOIN c_task_assignment_view ON xxxxx = xxxxx

.

.

.

and is blocked by

=> Oracle session identified by:

{

instance: 1 (kpkpkpkpk.kpkpkpkpk)

os id: 23090

process id: 3231, oracle@monkeymachine.prod.fdt.swedish.se

session id: 261

session serial #: 39729

module name: 0 (xxxx.exe)

}

which is waiting for 'buffer busy waits' with wait info:

{

p1: 'file#'=0xca

p2: 'block#'=0x2

p3: 'class#'=0xd ◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄ bitmapped file space header

time in wait: 18.999227 sec ◄◄◄

timeout after: never

wait id: 47356

blocking: 25 sessions ◄◄◄

current sql_id: 0

current sql: <none>

short stack: ksedsts()+426<-ksdxfstk()+58<-ksdxcb()+872<-sspuser()+223<-__sighandler()<-read()+14<-snttread()+16<-nttfprd()+354<-nsbasic_brc()+399<-nioqrc()+438<-opikndf2()+999<-opitsk()+910<-opiino()+936<-opiodr()+1202<-opidrv()+1094<-sou2o()+165<-opimai_real()+422<-ssthrdmain()+417<-main()+256<-__libc_start_main()+245

wait history:

* time between current wait and wait #1: 0.000084 sec

1. event: 'SQL*Net message from client'

time waited: 0.008136 sec

wait id: 47355 p1: 'driver id'=0x28444553

p2: '#bytes'=0x1

* time between wait #1 and #2: 0.000043 sec

2. event: 'SQL*Net message to client'

time waited: 0.000002 sec

wait id: 47354 p1: 'driver id'=0x28444553

p2: '#bytes'=0x1

* time between wait #2 and #3: 0.000093 sec

3. event: 'SQL*Net message from client'

time waited: 2.281674 sec

wait id: 47353 p1: 'driver id'=0x28444553

p2: '#bytes'=0x1

}

and may or may not be blocked by another session.

.

.

.

Chain 2:

-------------------------------------------------------------------------------

Oracle session identified by:

{

instance: 1 (kpkpkpkpk.kpkpkpkpk)

os id: 10122

process id: 2850, oracle@monkeymachine.prod.fdt.swedish.se

session id: 97

session serial #: 47697

module name: 0 (xxxx.exe)

}

is waiting for 'enq: CR - block range reuse ckpt' with wait info: ◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄

{

p1: 'name|mode'=0x43520006

p2: '2'=0x10b22

p3: '0'=0x1

time in wait: 2.018004 sec

timeout after: never

wait id: 81594

blocking: 0 sessions

current sql_id: 1335044282

current sql: <none>

.

.

.

and is blocked by

=> Oracle session identified by:

{

instance: 1 (kpkpkpkpk.kpkpkpkpk)

os id: 110528

process id: 24, oracle@monkeymachine.prod.fdt.swedish.se

session id: 3817 ◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄ CKPT background process

session serial #: 46215

}

which is waiting for 'control file sequential read' with wait info: ◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄

{

p1: 'file#'=0x0

p2: 'block#'=0x11e

p3: 'blocks'=0x1

px1: 'disk number'=0x4

px2: 'au'=0x34

px3: 'offset'=0x98000

time in wait: 0.273981 sec

timeout after: never

wait id: 17482450

blocking: 45 sessions ◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄

current sql_id: 0

current sql: <none>

short stack: ksedsts()+426<-ksdxfstk()+58<-ksdxcb()+872<-sspuser()+223<-__sighandler()<-semtimedop()+10<-skgpwwait()+192<-ksliwat()+2199<-kslwaitctx()+205<-ksarcv()+320<-ksbabs()+602<-ksbrdp()+1167<-opirip()+541<-opidrv()+581<-sou2o()+165<-opimai_real()+173<-ssthrdmain()+417<-main()+256<-__libc_start_main()+245

wait history:

* time between current wait and wait #1: 0.000012 sec

1. event: 'control file sequential read'

time waited: 0.005831 sec

wait id: 17482449 p1: 'file#'=0x0

p2: 'block#'=0x11

p3: 'blocks'=0x1

* time between wait #1 and #2: 0.000012 sec

2. event: 'control file sequential read'

time waited: 0.011667 sec

wait id: 17482448 p1: 'file#'=0x0

p2: 'block#'=0xf

p3: 'blocks'=0x1

* time between wait #2 and #3: 0.000017 sec

3. event: 'control file sequential read'

time waited: 0.009160 sec

wait id: 17482447 p1: 'file#'=0x0

p2: 'block#'=0x1

p3: 'blocks'=0x1

}

.

.

.

Chain 4:

-------------------------------------------------------------------------------

Oracle session identified by:

{

instance: 1 (kpkpkpkpk.kpkpkpkpk)

os id: 46479

process id: 1036, oracle@monkeymachine.prod.fdt.swedish.se

session id: 1670

session serial #: 6238

module name: 0 (xxxx.exe)

}

is waiting for 'buffer busy waits' with wait info:

{

p1: 'file#'=0xca

p2: 'block#'=0x2

p3: 'class#'=0xd ◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄ bitmapped file space header

time in wait: 18.954206 sec ◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄

timeout after: never

wait id: 20681

blocking: 0 sessions

current sql_id: 343919375

current sql: SELECT * FROM ( SELECT xxxxx FROM task JOIN request ON xxxxx = xxxxx JOIN xxxxx ON xxxxx = xxxxx JOIN c_task_assignment_view ON xxxxx = xxxxx

.

.

.

and is blocked by

=> Oracle session identified by:

{

instance: 1 (kpkpkpkpk.kpkpkpkpk)

os id: 44958

process id: 523, oracle@monkeymachine.prod.fdt.swedish.se

session id: 4681

session serial #: 41996

module name: 0 (xxxx.exemonkeymachine.prod.fdt.swedish.se (TNS)

}

which is waiting for 'buffer busy waits' with wait info: ◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄

{

p1: 'file#'=0xca

p2: 'block#'=0x2

p3: 'class#'=0xd ◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄ bitmapped file space header

time in wait: 18.959429 sec ◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄

timeout after: never

wait id: 153995

blocking: 101 sessions ◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄◄

current sql_id: 343919375

current sql: INSERT INTO xx_xxx_xx(xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx, xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx, xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx, xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx, xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx) VALUES (xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx, xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx, xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx, xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx, xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx)

short stack: ksedsts()+426<-ksdxfstk()+58<-ksdxcb()+872<-sspuser()+223<-__sighandler()<-semtimedop()+10<-skgpwwait()+192<-ksliwat()+2199<-kslwaitctx()+205<-ksqcmi()+21656<-ksqcnv()+809<-ksqcov()+95<-kcbrbr_int()+2476<-kcbrbr()+47<-ktslagsp()+2672<-ktslagsp_main()+945<-kdliAllocCache()+37452<-kdliAllocBlocks()+1342<-kdliAllocChunks()+471<-kdliWriteInit()+1249<-kdliWriteV()+967<-kdli_fwritev()+904<-kdlxNXWrite()+577<-kdlx_write()+754<-kdlxdup_write1()+726<-kdlwWriteCallbackOld_pga()+1982<-kdlw_write()+1321<-kdld_write()+410<-kdl

wait history:

* time between current wait and wait #1: 0.756195 sec

1. event: 'direct path write temp'

time waited: 0.406543 sec

wait id: 153994 p1: 'file number'=0xc9

p2: 'first dba'=0xb28fc

p3: 'block cnt'=0x4

* time between wait #1 and #2: 0.000001 sec

2. event: 'ASM IO for non-blocking poll'

time waited: 0.000000 sec

wait id: 153993 p1: 'count'=0x4

p2: 'where'=0x2

p3: 'timeout'=0x0

* time between wait #2 and #3: 0.000002 sec

3. event: 'ASM IO for non-blocking poll'

time waited: 0.000001 sec

wait id: 153992 p1: 'count'=0x4

p2: 'where'=0x2

p3: 'timeout'=0x0

}

and may or may not be blocked by another session.

.

.

We wanted to try a couple of hidden parameters that control fast object-level truncation and checkpointing, as they had helped us a lot in similar scenarios in the past, but first we had to involve Oracle Support. After carefully analyzing the situation, they agreed and asked us to try them, as they had started to suspect this was the aftermath of a known 19c bug.

[oracle@oracleontario ~]$ !sql

sqlplus / as sysdba

SQL*Plus: Release 19.0.0.0.0 - Production on Sun Apr 24 00:24:47 2022

Version 19.15.0.0.0

Copyright (c) 1982, 2022, Oracle. All rights reserved.

Connected to:

Oracle Database 19c Enterprise Edition Release 19.0.0.0.0 - Production

Version 19.15.0.0.0

SQL>

SQL> @hidden

Enter value for param: db_fast_obj_truncate

old 5: and a.ksppinm like '%&param%'

new 5: and a.ksppinm like '%db_fast_obj_truncate%'

Parameter Session Value Instance Value descr

--------------------------------------------- ------------------------- ------------------------- ------------------------------------------------------------

_db_fast_obj_truncate TRUE TRUE enable fast object truncate

_db_fast_obj_ckpt TRUE TRUE enable fast object checkpoint

SQL> ALTER SYSTEM SET "_db_fast_obj_truncate" = false sid = '*';

System altered.

SQL>

SQL> ALTER SYSTEM SET "_db_fast_obj_ckpt" = false sid = '*';

System altered.

SQL>

Soon after setting them, we saw a drastic drop in the waits and the system seemed much better. But this was during off-peak hours, so there wasn’t enough workload to show anything odd.

And as we suspected, the issue came back: the next day, during peak business hours, we started seeing the same set of events again. This time ‘latch: cache buffers chains’ was quite high and prominent, which it had not been earlier.

Initially we tried to fix some of the statements that were expensive in terms of logical I/Os (memory reads), but that hardly helped. The issue persisted even after setting a higher value for db_block_hash_latches and decreasing cursor_db_buffers_pinned. AWR continued to show ‘latch: cache buffers chains’ in the top ten foreground timed events, and ‘latch free’ in first place among the background timed events.

Oracle Support confirmed the behavior was due to published bug 33025005, where excessive ‘latch: cache buffers chains’ waits are seen after upgrading from 12c to 19c. They suggested applying patch 33025005 and then setting the hidden parameter “_post_multiple_shared_waiters” to FALSE (in MEMORY only, as a test), which disables multiple shared waiters in the database.

-- After applying patch 33025005

SQL> ALTER SYSTEM SET "_post_multiple_shared_waiters" = FALSE SCOPE = MEMORY;

System altered.

Even after applying the patch and setting the recommended undocumented parameter, the issue persisted, and we were totally clueless.

As a last resort, we flushed the database buffer cache, and bingo: that crude method of purging the cache drastically reduced the load from CBC latching and from ‘enq: CR – block range reuse ckpt’, and the system ran fine soon after the flush.

So, none of the earlier fixes worked for us: we changed multiple parameters related to checkpointing and shared waiters and applied a bug-fix patch (33025005), to no avail. Finally, flushing the buffer cache did the trick. Oracle Support agreed that this was due to a new/unpublished bug (33125873 or 31844316) that is not yet fixed in 19.15 and will be included in 23.1. Both are in status 11, which means Development is still working on them, so there is no fix yet.

Hope It Helped!

Prashant Dixit

Neha said

We are hitting the same issue in our environment. Support advised us to apply this patch:

Please apply patch 33987170 and check the result (Bug 33990590 – DATABASE HANG ON LGWR – MAR 23 INCIDENT (Base bug 33987170 – CHECKPOINT INCOMPLETE HANG PLUS ORA-00700 [KCBBXSV_NOWRITESBUF]).)

FatDBA said

Hi Neha

Thanks for reading the post. Yes, each bug behaves differently. In our case it was something that hadn’t been recorded, and Oracle Support declared it a new bug on which development work has started. The flush of the buffer cache and a patch for a different observation did work, though.

Your case is a little different from ours due to a different code path and the processes involved.

Please keep me posted about the progress. My email is prashantdixit@fatdba.com

Charly said

We are hitting the same bug. Thanks for the information, I appreciate it.

saketkumarsitamarhi said

Thanks Prashant

This is really good information. It will definitely help us while troubleshooting performance issues.

I would request that you please post about HANGANALYZE and SYSTEMSTATEDUMP.

FatDBA said

Thank you Saket!

I have already posted on SYSTEMSTATEDUMP in the past. Below is the direct link to it.

For HANGANALYZE, I have made another post

Chungtc said

Hi, we may have the same symptom: many waits on “enq: CR – block range reuse ckpt” and “reliable message”.

So, have you found the solution?

Chung said

Hi, we have the same problem! Have you got the final solution from Oracle Support? Can you please share it?

FatDBA said

Hi Chung,

Yes, the solution is there at the end of the post, where we fixed the issues after setting a few hidden/undocumented parameters.